Traffic Shaping and Bandwidth Management For Novell NetWare

TSE 3.2 Available Now

TSE 3.2 offers the following valuable capabilities:

The TSE is useful for virtually any application where you need to limit, monitor, or filter the flow of network traffic. Rate-driven thresholds let you accommodate traffic bursts while managing long-term, high-volume connections. Extremely simple TSE configurations effectively impose download quotas on hyperactive downloaders without punishing the average user. You can kiss headaches like MP3s, game demos, and movie trailers good-bye.

Scales to a Million Connections

A test of the TSE proved it capable of managing in excess of 1,000,000 simultaneous connections, applying an independent data rate to each, on a single AMD K6-III / 500 with 256 MB RAM running off-the-shelf NetWare 5! Test systems used packet generation software to flood the server with 10,000 to 20,000 packets a second. The TSE found the appropriate data rate for each one, with less than 20% CPU load. Who needs a million connections? Well, nobody... but if the TSE can handle 1,000,000 connections, it can manage real-world situations like yours.

Packet Capture & Continuous Recording

TSE 3.2 adds powerful packet capture capabilities, allowing you to capture traffic in or out of any interface on a NetWare server. Used in conjunction with rules, the TSE can record just the traffic you are interested in. The TSE is capable of continuous recording of traffic to disk, including rolling of packet capture files, without skipping a single packet. This makes the TSE a powerful tool for troubleshooting problems. Developers can record all traffic on all interfaces simultaneously, in a single packet capture file, to preserve both the order and timing of the packets. It's great for profiling applications as well. The buffering can be increased to use as much as 128 MB of RAM to capture even the most violent spikes in traffic. Using CRON, you can roll the packet capture files automatically to continuously record and archive any desired traffic.

Connection Import / Export

You can use Address Masks to automatically build a connection table entry with a default data rate, then export, modify, and import the data rates for each connection individually. You determine what constitutes a connection, so it could be traffic for a given workstation or host, a TCP connection, a TCP port, or any combination thereof. If you can use a text editor, you can assign each workstation a distinct data rate. And this can be done for any number of workstations.

Connection Auditing / Logging

Normally the TSE creates connection table entries and then removes them as they age out in accordance with the Time To Live you set on the connection. During the life of a connection, the total number of bytes (rolls over at 256 TB) and packets (rolls over at 4G packets) is accumulated. Previously, this information was just thrown away by the TSE as connections aged out. The Connection Audit process now saves this information to a log by default. Each entry is time and date stamped and shows the address information as well as a count of bytes and frames passed. This information can easily be used as the basis for auditing internet usage and the hosts / ports accessed, or for diagnosing connection masks. Auditing can be enabled / disabled on a mask-by-mask basis, and the entire auditing function can be disabled interactively.

More Stable, More Efficient

TSE 3.2 is by far the most stable and reliable build of the TSE. The TSE performs routine integrity checks of all critical data structures, implements semaphore protection on all dynamic data, and implements corrective and protective measures aimed at making sure the TSE is the strongest link in the chain. It will unload gracefully even under the most severe conditions, and offers improved control over memory usage. It is also speedier than before.
The overhead of using the TSE has been measured on a P III / 500 at less than 10 microseconds per frame.

Easier Address Masks

The Configuration Tool now has drop-down lists of standard address masks for various combinations of network, node, and port. Just pick the source and destination template you want and click OK.
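
To illustrate how an address mask can decide what counts as a connection, here is a minimal Python sketch. It is not the TSE's implementation or file format: the `Packet` fields, the mask tuples, the `ConnectionTable` class, and the one-line-per-connection text format are all assumptions made up for this example. The counter wrap points are also an assumption, chosen to match the 256 TB and 4G rollover figures quoted above.

```python
from dataclasses import dataclass

# Assumed counter widths that match the quoted rollover points.
BYTE_WRAP = 2 ** 48     # 256 TB
PACKET_WRAP = 2 ** 32   # 4G packets

@dataclass(frozen=True)
class Packet:
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    length: int

# A "mask" here is simply the list of fields that identify a connection.
PER_WORKSTATION = ("src_ip",)
PER_TCP_CONNECTION = ("src_ip", "src_port", "dst_ip", "dst_port")

def make_key(pkt, mask):
    """Keep only the masked-in fields; everything else is ignored."""
    return tuple(str(getattr(pkt, field)) for field in mask)

class ConnectionTable:
    def __init__(self, mask, default_rate):
        self.mask = mask
        self.default_rate = default_rate
        self.entries = {}

    def _entry(self, key):
        # New connections start with the mask's default data rate.
        return self.entries.setdefault(
            key, {"rate": self.default_rate, "bytes": 0, "packets": 0})

    def account(self, pkt):
        entry = self._entry(make_key(pkt, self.mask))
        entry["bytes"] = (entry["bytes"] + pkt.length) % BYTE_WRAP
        entry["packets"] = (entry["packets"] + 1) % PACKET_WRAP
        return entry

    def export_text(self):
        """One line per connection: comma-joined key, a space, the rate."""
        return "\n".join(f"{','.join(key)} {entry['rate']}"
                         for key, entry in sorted(self.entries.items()))

    def import_text(self, text):
        """Read edited rates back in, creating entries that do not exist."""
        for line in text.splitlines():
            if line.strip():
                key_text, rate_text = line.rsplit(" ", 1)
                self._entry(tuple(key_text.split(",")))["rate"] = int(rate_text)
```

With `PER_WORKSTATION`, every packet from the same source address lands in the same entry, so editing one line of the exported text changes the rate for that whole workstation. Switching to `PER_TCP_CONNECTION` would give each TCP connection its own entry instead.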

Priority Queuing

Priority queues allow you to set a maximum bandwidth capacity which corresponds to the available bandwidth delivered by an ISP's connection. The traffic entering the queue is assigned a priority level by rules (and eventually by a more easily managed means), ensuring that fluff traffic consumes bandwidth only after high priority traffic has been serviced. You can create several priority queues, each with several priority levels, as needed. The behavior of the queue is determined by configurable values for each priority level, allowing you to determine how strict or elastic the prioritization is. In addition, the relative priority of each level can be controlled to a very fine degree.

TOS & DiffServ Tagging

As of NetWare 5.1 + SP 2a, NetWare didn't support QoS tagging as part of its IP stack. Later versions of TCPIP support setting a default tag for all frames, i.e. the outbound traffic from TCPIP is tagged uniformly with the same tag, so there is no differentiation between services based on user-defined priorities. Why is this important? Rather than make your server do the queuing and prioritization, TOS and DiffServ tagging allow you to assign a priority value which is then interpreted by the QoS-aware switches and routers to which the server is connected. TSE Beta 2 allows you to tag outgoing frames in the same way you assign data rates. Any traffic you can construct a rule to identify can be tagged with any desired tag value, as a rule action. This capability overrides the "default" value assigned in newer versions of TCPIP. Non-IP packets are protected from being accidentally tagged. (For IPsec, the TSE's tagging is only possible on the outer unencrypted IP envelopes of encrypted VPN packets, as the TSE has no way to decrypt the encrypted payload, retag it, and re-encrypt.)

Web Status

The TSE can optionally create / update an HTML formatted status page which can be directed into the documents folder of a web server running on the same server as the TSE.
This allows you to view the same information generally available from the console. The output is rather drab; however, as a proof of concept it is OK. You can also output the status information as a JavaScript library, which can be used to extract whatever information you want to create custom pages. (We will be looking at an XML method in the future.)

Identifies NDS objects for audit logs and web status

The TSE now integrates with our MAC2USER NDS name resolver. The MAC2USER agent searches NDS to identify the most recent association between an IP or MAC address and the NDS user object currently logged in from that address. Within a couple of minutes of authentication, MAC2USER knows and will provide the NDS object name to the TSE for use in Audit Logs, web status pages, etc. You can audit / monitor traffic down to the actual NDS user generating the traffic, rather than IP addresses or DNS names which only identify the workstation. Please e-mail me if you want to try this! We need testers!

MAC2USER even works for networks still using IPX. If you are using Novell's DHCP 3.x service, MAC2USER will figure out which user is using which IP address by using the node portion of the IPX address and matching it up with DHCP or ZEN information. This is a marvelous solution for places that still use IPX and need to audit IP traffic usage.

MAC2USER integrates with Novell's DHCP 3.x service as well as the ZEN for Desktops workstation registration agent. MAC2USER will hunt down associations between user and MAC or IP address using DHCP or ZEN information in NDS. The most recent User ID is returned for a given address, ensuring that activity is attached to the proper user, even on machines used by multiple users. Provided they log in to NDS, we can tell who they are.

Packet Capture compatible with Ethereal Protocol Analyzer

The TSE allows you to use rule actions to capture any traffic flowing through your NetWare server.
The TSE's high performance packet capture engine can capture thousands of packets a second and dump them into SNOOP (RFC-1761) files for analysis by many protocol analyzers, including the popular, free, open source sniffer Ethereal. Since packet capture can be triggered by rules, you can capture exactly the packets you want. The TSE is the perfect tool for simultaneous capture of traffic on multiple interfaces. It makes troubleshooting Novell's NAT, routing, and Border Manager a snap, as you see exactly what the server sees, without the guesswork of aligning captures from multiple sniffers.

Advanced Queue Management

Beta 2 of the TSE offered no configurable control over queue management. When more than 8 seconds of traffic is queued, the TSE can optionally drop the excess traffic using the "drop tail" queuing discipline. For most applications, an 8 second queue depth is far too long, and for priority queuing, there is no means to differentiate between high latency and low latency traffic. For example, it is stupid to send 8 second old packets which are part of a streaming feed.

TSE 3.2 implements a programmable AQM algorithm which can implement a variety of congestion control schemes, including instantaneous versions of "Drop Tail" (aka DT), RED, Gentle RED, and exponential GRED. You can create a custom drop probability curve to implement any arbitrary queue discipline as a function of queue depth.

With Drop Tail, when the maximum queue depth is reached, any new packets entering the queue are dropped. This is still the most used method on core routers in the Internet.

Gentle RED Instantaneous is a modified version of the RED queuing discipline developed at SPRINT Labs as a replacement for DT on their core routers. GRED-I handles multiple conversations more fairly, and is computationally lighter weight than more esoteric methods such as BLUE, GREEN, and other colorful methods.
Several theoretical studies of RED, WRED, and DT show that RED and WRED are very sensitive to parameter settings and provide marginal improvements over DT when tuned optimally. However, these methods can significantly degrade performance when slightly out of tune. GRED-I has a very shallow-sloped, linear drop response to the instantaneous depth of the queue, perhaps a 0-5% drop rate over the depth of the queue. Exponential GRED-I uses an exponential function which provides a smooth transition between a GRED-I-like linear drop function and DT-like behavior.
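
The drop disciplines described above can each be viewed as a function from instantaneous queue depth to a drop probability. The Python sketch below is only an illustration of that idea, not the TSE's algorithm. The function names, the 5% ceiling (taken from the "perhaps 0-5%" figure), the choice to drop everything once the queue is full, and especially the exponential formula and its shape parameter `k` are assumptions; the source does not give the actual curve.

```python
import math
import random

def drop_tail(depth, max_depth):
    """Drop Tail: accept everything until the queue is full, then drop."""
    return 1.0 if depth >= max_depth else 0.0

def gred_i(depth, max_depth, max_p=0.05):
    """Shallow linear ramp from 0 to max_p over the instantaneous depth."""
    if depth >= max_depth:
        return 1.0  # assumed: a full queue cannot accept more packets
    return max_p * depth / max_depth

def exp_gred_i(depth, max_depth, k=4.0):
    """Assumed exponential curve, k > 0.

    A small k gives a nearly linear ramp; a large k keeps the drop
    probability close to zero until the queue is almost full, which
    approaches Drop Tail behavior.
    """
    if depth >= max_depth:
        return 1.0
    x = depth / max_depth
    return math.expm1(k * x) / math.expm1(k)

def should_drop(depth, max_depth, curve, rng=random.random):
    """Decide the fate of one arriving packet using the given curve."""
    return rng() < curve(depth, max_depth)
```

Because the discipline is just a function of queue depth, a custom curve can be passed to `should_drop` in the same way as the three shown here, for example a lookup table with interpolation between points.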

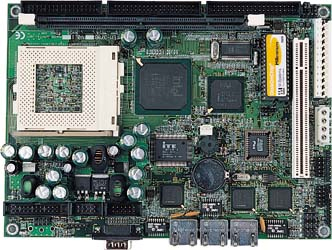

TSE API and Callback Interface

The TSE exposes both an event-oriented callback API and a simplified bandwidth management API. Both APIs are simple and relatively efficient. The callback API allows 3rd party developers to register for TSE events. This API is somewhat bi-directional, as the TSE can also generate events which request information from the external application, for example to resolve addresses to names or to request policy information. The bandwidth management API allows for the discrete modification and creation of static and dynamic configuration information, including modification and population of the connection table. This, combined with the ability of the TSE to perform an arbitrary action for any packets belonging to a given connection, gives the external program complete control over the bandwidth of the server and all traffic flowing through it. This functionality is presented in a high level API and serves as the basis for the construction of "policy agents" which are notified when new connections are created, and subsequently enforce policies by manipulating the TSE's connection tables.

Improved Stochastic Priority Queuing

The priority queuing capabilities, introduced in Beta 2, elicited a lot of feedback. Beta 3 and TSE 3.2 improve the efficiency and accuracy of the priority queuing with some improvements to the stochastic priority queuing algorithm. These improvements allow the TSE to more quickly and accurately balance the packets emitted from the queue. In addition, a modified version of the GRED algorithm is available on each priority level as well as for the QoS FIFO which is fed by the priority queue. These improvements allow for greater utilization while avoiding congestion, flutter, and retransmits.

Per Connection Actions

Perhaps the biggest single improvement over Beta 2, TSE 3.2 allows each connection table entry to specify an action, much like the Then Clause of a rule.
This allows each connection entry to explicitly specify what should happen to the traffic next. Allowed actions include Drop, Forward, rate limit, priority queue, and audit, as well as rule flow actions like Next Rule or jumping to a specific rule. Essentially the connection entry becomes a rule in the rule set. This greatly simplifies the construction of rule sets, allows for easy handling of exceptions, and adds power to the TSE API. A default action can be specified in address masks via the configuration tool. These actions can also be exported / imported, allowing them to be used from ASCII connection tables.

QoS Sharing

In Beta 2, each connection is associated with a QoS node. This QoS node serves as a place for collecting statistics, as well as acting as a dedicated FIFO queue for that connection's traffic when rate limiting. It is, however, often desirable for a set of arbitrary connections to feed traffic to a dynamically created FIFO. (Consider accommodating the rate limiting of a group of users.) TSE 3.2 offers a mechanism where a connection can be created so that it "shares" the QoS node of an already existing connection. The primary connection can use a text label as an address, making it easy to manage these arbitrary groupings in connection import files. The TSE API accommodates this sharing as well.

As connections age out, the TSE manages an "in use count" for dynamically created QoS nodes, and when a node is no longer in use, the underlying QoS node is removed, triggering the audit process to record information. (This also means that there is only one "bucket" of statistics accumulated for the several connections, so auditing would be necessary to capture more detailed information.) Since these arbitrary groupings can cross key groups, or even key types, they are useful for a variety of other applications as well. If used in conjunction with auditing, they facilitate arbitrary summarization of related connections.
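
The sharing and "in use count" behavior described above can be sketched in a few lines of Python. This is an illustration only, not the TSE API: the `QosNode` and `QosRegistry` classes, their method names, and the shape of the audit record are all hypothetical.

```python
class QosNode:
    """One shared bucket of statistics (and, conceptually, one FIFO)."""
    def __init__(self, label, rate_bps):
        self.label = label
        self.rate_bps = rate_bps
        self.bytes = 0
        self.packets = 0
        self.in_use = 0

class QosRegistry:
    def __init__(self):
        self.nodes = {}        # text label -> QosNode
        self.connections = {}  # connection key -> QosNode
        self.audit_log = []    # (label, bytes, packets) per removed node

    def add_connection(self, conn_key, label, rate_bps):
        """Attach a connection to the node with this label, creating it
        on first use. The rate of an existing node is left unchanged."""
        node = self.nodes.get(label)
        if node is None:
            node = QosNode(label, rate_bps)
            self.nodes[label] = node
        node.in_use += 1
        self.connections[conn_key] = node
        return node

    def account(self, conn_key, length):
        """All sharing connections accumulate into the same counters."""
        node = self.connections[conn_key]
        node.bytes += length
        node.packets += 1

    def age_out(self, conn_key):
        """Drop one connection; remove and audit the node when the last
        connection using it is gone."""
        node = self.connections.pop(conn_key)
        node.in_use -= 1
        if node.in_use == 0:
            del self.nodes[node.label]
            self.audit_log.append((node.label, node.bytes, node.packets))
```

Note that the audit record carries only the group totals, which mirrors the point above that a shared node gives a single "bucket" of statistics for all of its connections.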
Full SMP Support

In Beta 2, the TSE did not make use of additional processors. In addition, there were diffuse problems with running Beta 2 on SMP machines where the second CPU would handle network interrupts. To address this, and to make the TSE more valuable on NetWare 6, which itself finally gives us a reason to do SMP in the first place, a lot of work had to be done. Under Beta 3 and now TSE 3.2, all TSE foreground processes can be offloaded to additional CPUs. Since the TSE makes use of several shared data structures, a major challenge of the development effort was to provide SMP support without eviscerating the performance of the TSE. A total redesign of the TSE's memory management, rewritten linked list management code, and optimization of foreground tasks have yielded a TSE which is faster on both uni-processor and multi-processor platforms.

The World's Fastest

TSE 3.2 is the world's fastest software-based bandwidth manager, based on the additional overhead required above and beyond the normal handling of packets in the OS to perform routing. On a PII/450, the additional per packet latency introduced by a robust TSE configuration was 1.94 microseconds per packet. This is the amount of additional processing time required to run the rule set against the packet, enqueue the packet, dequeue the packet, and send it on its way. On PIII and P4 systems, the additional latency is barely measurable and typically far less than a microsecond.

The World's Smallest

Why is fast important? Because you can use a cheaper system to do the same work! Duh! Using off-the-shelf components and a stripped-down installation of NetWare 4.2, and later on 5.1, we constructed the world's smallest bandwidth manager! It's based on a 5.25" form factor SBC with 3 10/100TX NICs. We could have used an extremely tiny 3.5" form factor platform about the size of a paperback novel, but it only had 2 NICs. The above platform has 3 Intel 10/100 NICs. It's a beautiful thing!

Last Modified 09-30-2004